Beyond Data Contracts

We need to integrate data contracts directly into data systems

A data contract is a specification that outlines how data consumers and producers interact with each other.

Its main components include:

Metadata: Basic information about the contract, including its name, version, owner, and status

Schema: The structure of the data, including columns, data types, and required fields

Semantics: Definitions of what the data means and how it should be interpreted

Quality Rules: Expectations for clean and reliable data, such as uniqueness, valid ranges, and completeness

Freshness / SLAs: How often the data is updated and how quickly it should be available

Ownership: The people or teams responsible for maintaining the data

Infrastructure: Where the data lives and how it is accessed, such as databases, APIs, or message queues

Business Rules: Important logic or calculations applied to the data

Compatibility: Rules for safely changing the data without breaking downstream systems

Governance: Policies around security, privacy, and proper usage of the data
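To make a few of these components concrete, here is a minimal sketch in Python covering metadata, schema, and two quality rules. The class and field names (`Column`, `DataContract`, `validate`) are hypothetical and do not follow any particular data contract standard. In practice the contract would usually be loaded from a YAML file rather than written in code.

```python
from dataclasses import dataclass, field


@dataclass
class Column:
    """One schema entry: a name, an expected Python type, and simple quality rules."""
    name: str
    dtype: type
    required: bool = True
    unique: bool = False


@dataclass
class DataContract:
    """Hypothetical, heavily simplified contract: metadata plus schema."""
    name: str
    version: str
    owner: str
    columns: list[Column] = field(default_factory=list)

    def validate(self, rows: list[dict]) -> list[str]:
        """Return human-readable violations; an empty list means the rows conform."""
        problems = []
        for col in self.columns:
            seen = set()
            for i, row in enumerate(rows):
                value = row.get(col.name)
                if value is None:
                    if col.required:
                        problems.append(f"row {i}: missing required field '{col.name}'")
                    continue
                if not isinstance(value, col.dtype):
                    problems.append(f"row {i}: '{col.name}' expected {col.dtype.__name__}")
                if col.unique:
                    if value in seen:
                        problems.append(f"row {i}: duplicate value in unique field '{col.name}'")
                    seen.add(value)
        return problems


# Illustrative contract for an imaginary "orders" dataset.
orders = DataContract(
    name="orders",
    version="1.0.0",
    owner="sales-data-team",
    columns=[
        Column("order_id", int, unique=True),
        Column("amount", float),
        Column("note", str, required=False),
    ],
)
```

Calling `orders.validate(rows)` on a batch of records returns one message per violated rule, which is the kind of check a producer could run before publishing data.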

Data contracts are extremely useful because they let you distill the complexity of a data system into a machine-readable format, typically YAML.

Treating data contracts as documentation, which is how they are mostly used today, is a good first step towards standardization.

Modern data platforms are highly fragmented across databases, warehouses, APIs, orchestration tools, and BI systems.

While data contracts help standardize metadata and expectations, most integrations between these systems still need to be implemented and maintained manually.

One area I’m curious about is how these specifications could be integrated directly into data systems.

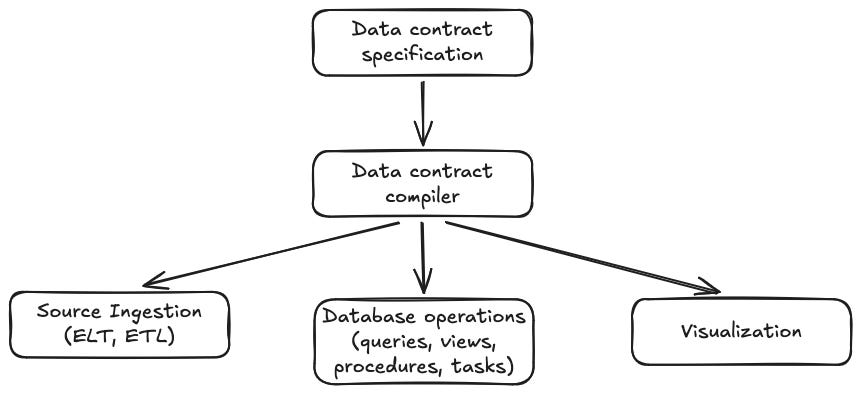

In theory, this could be done via a “data contract compiler” that reads the specification and generates an integration plan for each target system.
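As a rough illustration of what such a compiler might do, the sketch below turns one contract into a list of SQL statements for a chosen target. Everything here is an assumption made for the example: the contract layout, the `TYPE_MAP` table, and the `compile_contract` function are hypothetical, and a real compiler would need to cover far more than table creation.

```python
# Hypothetical mapping from contract-level types to each target's column types.
TYPE_MAP = {
    "snowflake": {"integer": "NUMBER(38,0)", "string": "VARCHAR", "timestamp": "TIMESTAMP_NTZ"},
    "sqlserver": {"integer": "BIGINT", "string": "NVARCHAR(255)", "timestamp": "DATETIME2"},
}


def compile_contract(contract: dict, target: str) -> list[str]:
    """Compile a contract dict into an ordered list of SQL statements for one target."""
    if target not in TYPE_MAP:
        raise ValueError(f"unsupported target: {target}")
    types = TYPE_MAP[target]
    column_defs = []
    for col in contract["schema"]:
        # Required fields in the contract become NOT NULL columns.
        nullability = "NOT NULL" if col.get("required", False) else "NULL"
        column_defs.append(f"{col['name']} {types[col['type']]} {nullability}")
    ddl = f"CREATE TABLE {contract['name']} ({', '.join(column_defs)})"
    return [ddl]


# Illustrative contract; the layout is an assumption, not a published standard.
contract = {
    "name": "orders",
    "schema": [
        {"name": "order_id", "type": "integer", "required": True},
        {"name": "note", "type": "string"},
    ],
}

for target in ("snowflake", "sqlserver"):
    print(target, compile_contract(contract, target))
```

The point of the design is that the contract stays the same while only the per-target mapping changes, so adding a new system means adding a new entry rather than rewriting the pipeline.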

Building this kind of integration would take a significant amount of effort.

With tools like Data Contract CLI, you can already generate specifications directly from Python, so that part is mostly solved.

The data contract as defined here would also need to be extended with these custom fields:

Connections: Define where the data comes from and where it should go, including databases, APIs, warehouses, and authentication details

Ingestion Rules: Define how data should be loaded, such as full refreshes, incremental updates, change tracking, and refresh schedules

Infrastructure Targets: Specify where the final data should live, such as Snowflake tables, SQL Server databases, or cloud storage locations

Pipeline Definitions: Describe how data moves through the system and which datasets depend on each other

Transformations: Define how the data should be cleaned, joined, filtered, or calculated using SQL, Python, dbt, or other logic

Execution / Orchestration: Define when pipelines should run, retry behavior, and execution order between jobs

Schema Evolution Rules: Define how schema changes should be handled, such as adding new columns safely or blocking breaking changes

Secrets / Credentials References: Reference secure credential storage without placing passwords or keys directly in the specification

Monitoring & Alerts: Define checks for freshness, row counts, failures, anomalies, and notifications when issues occur

Semantic Layer Metadata: Define business-friendly metrics, dimensions, relationships, and definitions used in dashboards or AI systems

Deployment Metadata: Define environments like development, staging, and production, along with rules for promoting changes between them
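A few of these extensions can be sketched together. The example below assumes a hypothetical layout for connections, ingestion rules, and pipeline dependencies, derives an execution order from the dependencies using the standard library's `graphlib`, and checks that credentials appear only as references. The key names (`secret_ref`, `pipelines`, and so on) and the `vault://` URIs are invented for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical extended contract; all keys and values are illustrative.
extended_contract = {
    "connections": {
        "source_db": {"type": "sqlserver", "password": {"secret_ref": "vault://data/source_db"}},
        "warehouse": {"type": "snowflake", "password": {"secret_ref": "vault://data/warehouse"}},
    },
    "ingestion": {
        "raw_orders": {"mode": "incremental", "cursor": "updated_at", "schedule": "0 * * * *"},
    },
    # Each dataset maps to the datasets it depends on.
    "pipelines": {
        "raw_orders": [],
        "clean_orders": ["raw_orders"],
        "daily_revenue": ["clean_orders"],
    },
}

SENSITIVE_KEYS = ("password", "token", "api_key")


def execution_order(contract: dict) -> list[str]:
    """Order datasets so that every dataset runs after its dependencies."""
    return list(TopologicalSorter(contract["pipelines"]).static_order())


def find_inline_secrets(contract: dict) -> list[str]:
    """Return 'connection.key' for every credential stored as a plain string."""
    offenders = []
    for name, conn in contract["connections"].items():
        for key in SENSITIVE_KEYS:
            if isinstance(conn.get(key), str):
                offenders.append(f"{name}.{key}")
    return offenders


print(execution_order(extended_contract))
print(find_inline_secrets(extended_contract))
```

A cyclic dependency would make `static_order()` raise `graphlib.CycleError`, which is a convenient place to reject an invalid specification before anything is deployed.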

From there, you could develop a Python library with a general interface that implements connections to different data systems such as Airbyte, SQL Server, and Snowflake.
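One possible shape for that interface is an abstract base class with a registry of per-system implementations. The sketch below is only an assumption about how it could look: `Connector`, `register`, and `connector_for` are hypothetical names, and the connector shown is a dry-run stand-in that records statements rather than talking to a real Snowflake account.

```python
from abc import ABC, abstractmethod


class Connector(ABC):
    """Common interface every target system would implement."""

    @abstractmethod
    def apply(self, statements: list[str]) -> None:
        """Apply a compiled plan (a list of statements) to the target system."""


# Registry from system name to connector class.
CONNECTORS: dict[str, type[Connector]] = {}


def register(system: str):
    """Class decorator that adds a connector to the registry under `system`."""
    def decorator(cls: type[Connector]) -> type[Connector]:
        CONNECTORS[system] = cls
        return cls
    return decorator


@register("snowflake")
class DryRunSnowflakeConnector(Connector):
    """Dry-run stand-in: records what would be executed instead of connecting."""

    def __init__(self) -> None:
        self.log: list[str] = []

    def apply(self, statements: list[str]) -> None:
        self.log.extend(f"[snowflake] {s}" for s in statements)


def connector_for(system: str) -> Connector:
    """Instantiate the registered connector for a system name."""
    if system not in CONNECTORS:
        raise ValueError(f"no connector registered for: {system}")
    return CONNECTORS[system]()


conn = connector_for("snowflake")
conn.apply(["CREATE TABLE orders (order_id NUMBER(38,0) NOT NULL)"])
print(conn.log)
```

With this structure, supporting another system such as SQL Server or Airbyte would mean registering one more class, while the code that reads the contract stays unchanged.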

An integrated, executable data contract system could dramatically reduce manual engineering effort while improving consistency, reliability, governance, and reproducibility across the entire data platform.

I’m interested in exploring this idea further over the next year, especially around creating tooling that can use these specifications to automatically build and coordinate modern data platforms.